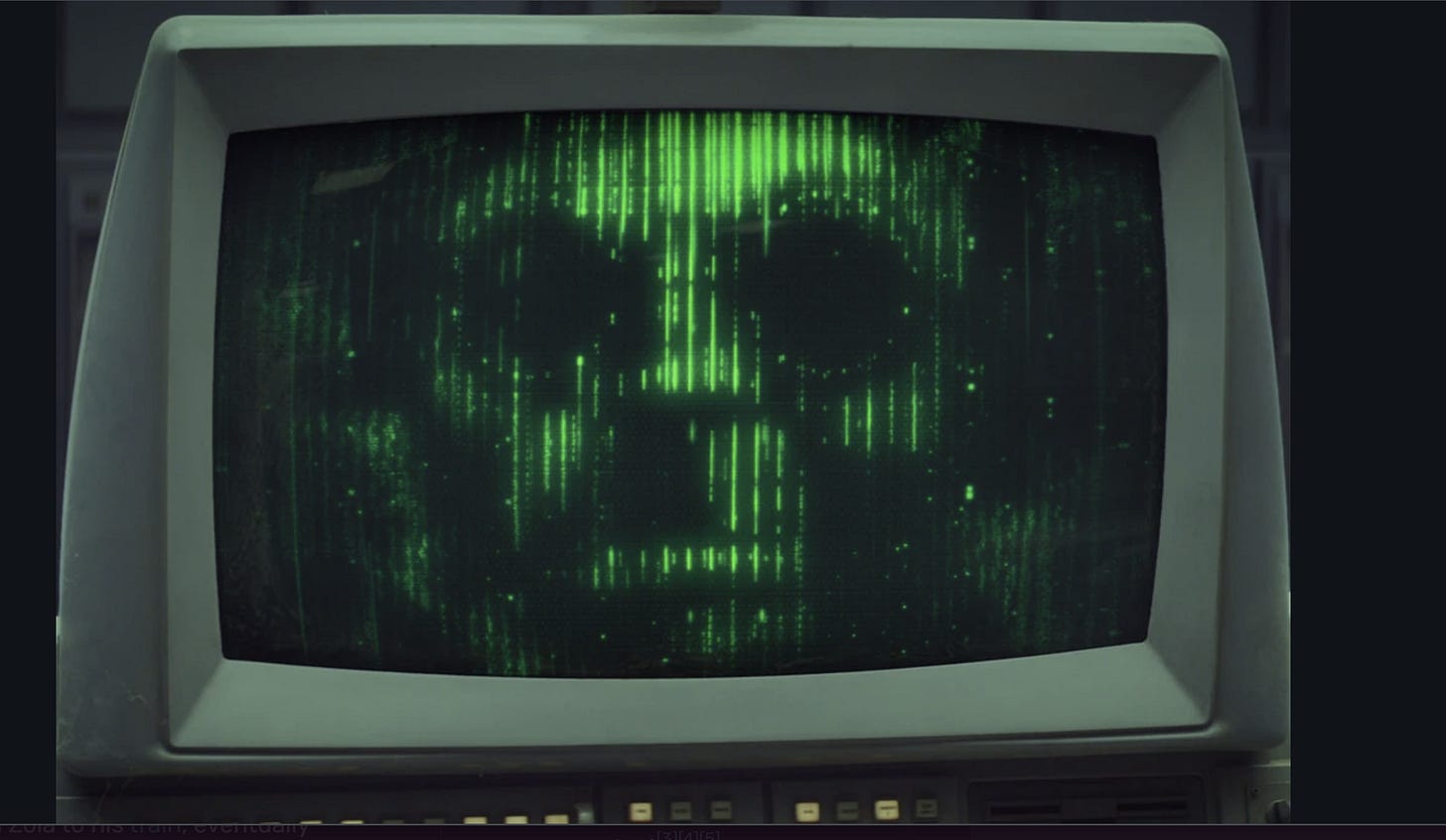

DOD sought to weaponize AI against Americans

MesoscaleNews exists to explain the forces shaping our future — from climate systems to political systems to the rapidly accelerating world of artificial intelligence.
